Exploratory Data Analysis – All Blog Posts on The Chemical Statistician

December 11, 2014

This series of posts introduces various methods of exploratory data analysis, providing theoretical background and practical examples. Fully commented, ready-to-use R scripts are available for every topic, so you can copy and paste them into your own analysis. Most of these posts involve data visualization and plotting, and I include detailed comments in the examples on how to invoke specific plotting commands in R.

I will return to this blog post to add new links as I write more tutorials.

Useful R Functions for Exploring a Data Frame

The 5-Number Summary – Two Different Methods in R

Conceptual Foundations of Histograms – Illustrated with New York’s Ozone Pollution Data

Quantile-Quantile Plots for New York’s Ozone Pollution Data

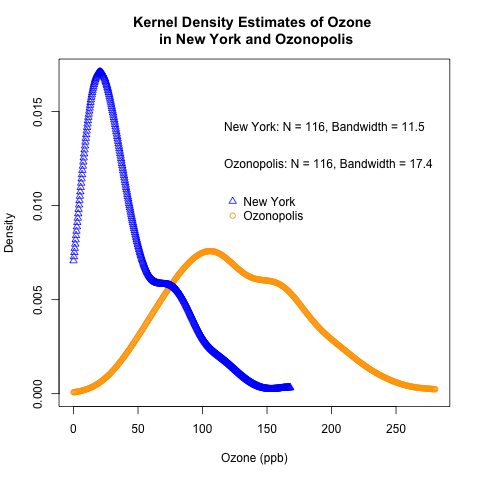

Kernel Density Estimation and Rug Plots in R on Ozone Data in New York and Ozonopolis

2 Ways of Plotting Empirical Cumulative Distribution Functions in R

Conceptual Foundations of Empirical Cumulative Distribution Functions

Combining Box Plots and Kernel Density Plots into Violin Plots for Ozone Pollution Data

Kernel Density Estimation – Conceptual Foundations

Variations of Box Plots in R for Ozone Concentrations in New York City and Ozonopolis

Computing Descriptive Statistics in R for Data on Ozone Pollution in New York City

How to Get the Frequency Table of a Categorical Variable as a Data Frame in R

The advantages of using count() to get N-way frequency tables as data frames in R
